Congratulations to me!

I’ve just had my first academic article published in a peer-reviewed journal as an independent researcher.

The journal is called Foresight.

It’s a futures studies journal published by Emerald Publishing.

Although the article doesn’t cite Gunkel directly, it’s closely related to his work and was also inspired by him, as I’ll explain in a moment.

Entitled “Linking Generative AI and Morphological Analysis to Conduct Foresight Evaluation,”(1) the article demonstrates a unique methodology I developed.

To explain why this is a big deal, or could become one if my research is expanded upon, consider the challenge of qualitative (i.e., non-mathematical) modeling.

With quantitative modeling, you can make a clear projection using math (statistics, probability). But how do you do that in situations where you can't quantify an outcome and you need to rely only on natural language?

This is a big problem in the discipline known as forecasting, foresight, or futures studies.

The discipline was founded in large part by Herman Kahn, whom Gunkel called his mentor.

Kahn was famous for developing future scenarios related to nuclear war, economic growth, space exploration, and many other futuristic topics.

But many people could, and did, criticize Kahn’s work as not scientifically rigorous enough, because his scenarios involved too much complexity to measure key aspects of their outcomes (including likelihood).

In many cases, Kahn built huge frameworks to justify colorful futuristic scenarios, but if they weren’t very likely to happen, or you couldn't quantify what kind of impact they would have, what was the point?

My Proposed Solution

There’s already a robust dialogue in the field of futures studies about using generative AI to predict the future. My focus was instead on using generative AI to evaluate past versions of the future.

The key realization I had was that many foresight studies don’t make single predictions.

Instead, they generate structured sets of possible futures expressed in qualitative language. Evaluating these structured future scenarios decades later typically requires expensive, subjective panels of experts, so it almost never happens.

What I show in the article is that generative AI can serve as a fast, low-cost evaluation tool for this kind of work—particularly when the original study is structured using a technique known as morphological analysis.

Because morphological analysis systematically combines overall scenarios with their component elements (think of “Global Conflict” as an overall scenario and the different varieties of that conflict as its subelements), an AI model can compare entire scenarios to the present state of the world, rank individual scenario elements, and translate qualitative language into relative scores.

This makes it possible to assess fit, consistency, and even weaknesses in the original scenario language without pretending the analysis is purely mathematical.
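For readers who want to see the mechanics, here is a minimal Python sketch of the general idea. It is an illustration under stated assumptions, not the procedure from the article: the two-dimension field, the single inconsistent pair, and the rankings are all made up, and the rankings stand in for what a generative AI model might return if asked to order each dimension’s values by how well they fit today’s world. The sketch enumerates the morphological field with `itertools.product`, drops combinations flagged as inconsistent, converts each ranking into relative scores between 0 and 1, and scores a whole scenario as the average of its elements.

```python
from itertools import product

# Hypothetical morphological field: each dimension lists qualitative values.
# These names are illustrative only, not taken from the article or the report.
FIELD = {
    "overall": ["Global Conflict", "Steady Growth"],
    "conflict_type": ["Regional wars", "Cold standoff", "None"],
}

# Hypothetical cross-consistency rule: value pairs that cannot co-occur.
INCONSISTENT = {("Global Conflict", "None")}


def scenarios(field, inconsistent):
    """Yield every combination of values, skipping inconsistent ones."""
    keys = list(field)
    for combo in product(*(field[k] for k in keys)):
        # Collect both orderings of every pair of chosen values.
        pairs = {(a, b) for a in combo for b in combo if a != b}
        if pairs & inconsistent:
            continue
        yield dict(zip(keys, combo))


def ranks_to_scores(ranked):
    """Map an ordered list (best fit first) to relative scores in [0, 1]."""
    n = len(ranked)
    if n == 1:
        return {ranked[0]: 1.0}
    return {item: (n - 1 - i) / (n - 1) for i, item in enumerate(ranked)}


def scenario_score(scenario, element_scores):
    """Average the scores of the values a scenario picks in each dimension."""
    vals = [element_scores[dim][value] for dim, value in scenario.items()]
    return sum(vals) / len(vals)


# Made-up rankings standing in for a generative AI model's output.
element_scores = {
    "overall": ranks_to_scores(["Steady Growth", "Global Conflict"]),
    "conflict_type": ranks_to_scores(["Regional wars", "Cold standoff", "None"]),
}

# Print every consistent scenario, highest relative score first.
for s in sorted(scenarios(FIELD, INCONSISTENT),
                key=lambda s: scenario_score(s, element_scores), reverse=True):
    print(f"{scenario_score(s, element_scores):.2f}", s)
```

Averaging element scores is only one simple way to roll element rankings up into a scenario score; a weighted scheme, or asking the model to rank whole scenarios directly, would be equally plausible choices.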

The results of the study suggest not only a better way to evaluate studies in hindsight, but also a better way to construct them in the first place so that they can be evaluated later.

If the method I piloted becomes a standard tool in the futurist’s toolbox, it will close a major gap in futures studies: the lack of a practical way to learn from past qualitative forecasts.

Instead of arguing endlessly about whether a prediction was accurate and how useful it was, futurists can use AI to numerically rank scenarios and then focus on improving their predictions the next time around.

Whither Gunkel in All This?

To test my unique methodology, I chose the 1976 Hudson Institute report The Next 200 Years: A Scenario for America and the World.(2)

The report used morphological analysis to develop a range of future scenarios that framed its analysis.

And guess what?

Gunkel’s name is mentioned as a contributor to this book in the Acknowledgements section.

And guess what else?

If you look in the book, you will find a number of lists that are—shall we say—obviously Gunkelian.

In writing this book, the Hudson Institute was primarily interested in pushing back against the doom-and-gloom attitudes of the 1970s anti-growth movement (I write much more about this in my paper). The Institute wanted to present an optimistic view of an automated future where artificial intelligence had given people plenty of leisure time.

Kahn and his colleagues called this sort of society a “quaternary” or “postindustrial” society and projected that it would be “a transition likely to be well under way in the next century” (i.e., the 2000s).

Accordingly, right up front on page 23 of The Next 200 Years, we find a Gunkel list speculating on what people would do with all this leisure time.

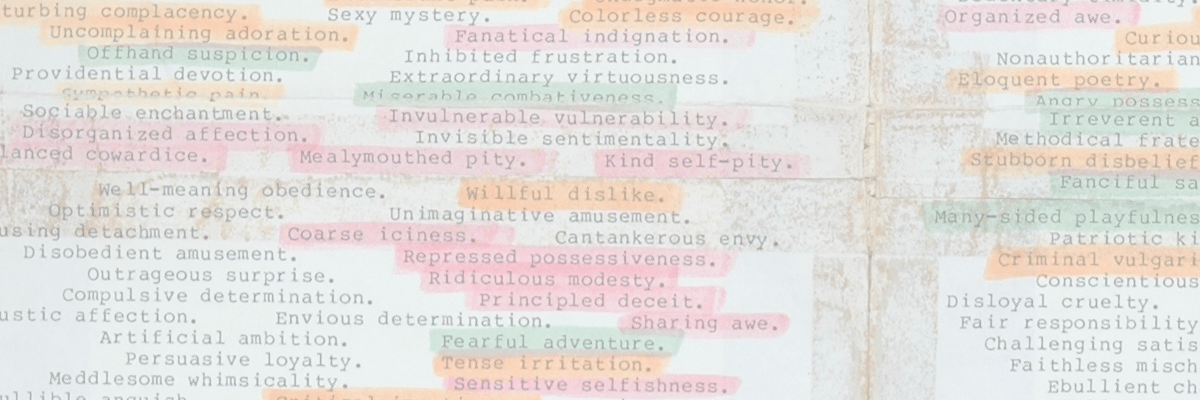

In the list above, Gunkel imagines the pursuits that people with much more leisure time might indulge in.

Well, what do you think?

Is our transition to this sort of society here in the first world “well under way” as the Hudson Institute predicted?

Will we get there soon?

Personally, I believe that analyses or future visions like this constantly miss the fact that human progress is demonstrably more like a stretched-out accordion than an elevator that rises from one floor to the next.

While one end of the accordion enters a new and wonderful space, the accordion still occupies all the space it has already traveled through, and the other end remains behind, back at the beginning, with people still starving and homeless, or too busy to do anything in their spare time but watch TikTok videos to relax. The gap grows; progress is made, but it’s far from uniform.

So when people like Gunkel talk and write about a new and inspiring future without work, they’re really talking about the tip of the spear, the professional class, an aspirational set of lucky people who get to enjoy the cutting-edge fruits of progress while many others are left behind.

Many of the futuristic ideas in The Next 200 Years no doubt came from Gunkel’s voracious consumption of science fiction and futurology literature throughout the 1960s and early 1970s.

Gunkel socked all these ideas away in his brain, made an endless number of lists and charts describing potential futuristic innovations (including the “Metamachines” chart I wrote about several weeks ago), and was apparently able to rattle them off on command.

The above list was the most obvious Gunkel list that I could find in The Next 200 Years, although there were several other solid candidates.

What’s Next for My Research?

The honest answer is: I’m not totally sure.

I wanted to take a victory lap in front of a friendly audience, so I asked ChatGPT to rate my article on a scale of 1 to 5, with 5 being the most groundbreaking and important and 1 being inconsequential.

To my delight, ChatGPT thought my work was a “4.”

The research, it said, had methodological originality, a clear, replicable procedure, a conceptual contribution, and timely relevance to the field.

The biggest problem, according to ChatGPT, was the study’s limited scope and uncertain applicability. That’s fair. I made the same disclaimers in the article myself.

ChatGPT then went on to say the following:

> If future work applies this method across multiple foresight studies, compares multiple models, or integrates RAG or expert-AI hybrids, it very plausibly becomes a 5/5 cornerstone paper for foresight evaluation.

That’s cool.

In other words, moving beyond the Hudson Institute’s report to a whole set of similar forecasts, or standardizing the procedure with a more robust verification regime that reduces bias as much as possible, could turn this method into an industry-standard practice!

Unfortunately, as an independent researcher with very few resources, I’m not sure I can develop this research into the robust process that ChatGPT apparently thinks it could become.

I’ve reached out to various potential stakeholders to let them know about my research, and I hope some of them will be interested.

I also have ideas for a few articles in other disciplines that would apply this technique; I believe these will be easier to publish and more relevant to Gunkel and ideonomy.

But who knows?

Maybe someone will want to collaborate on a follow-up, and they’ll also happen to have the time and resources to do so.

(1) McIntosh, A. (Feb. 2026). “Linking Generative AI and Morphological Analysis to Conduct Foresight Evaluation.” Foresight, 1-15. https://doi.org/10.1108/FS-02-2025-0027

(2) Kahn, H. et al. (1976). The Next 200 Years: A Scenario for America and the World. William Morrow & Co.: New York.